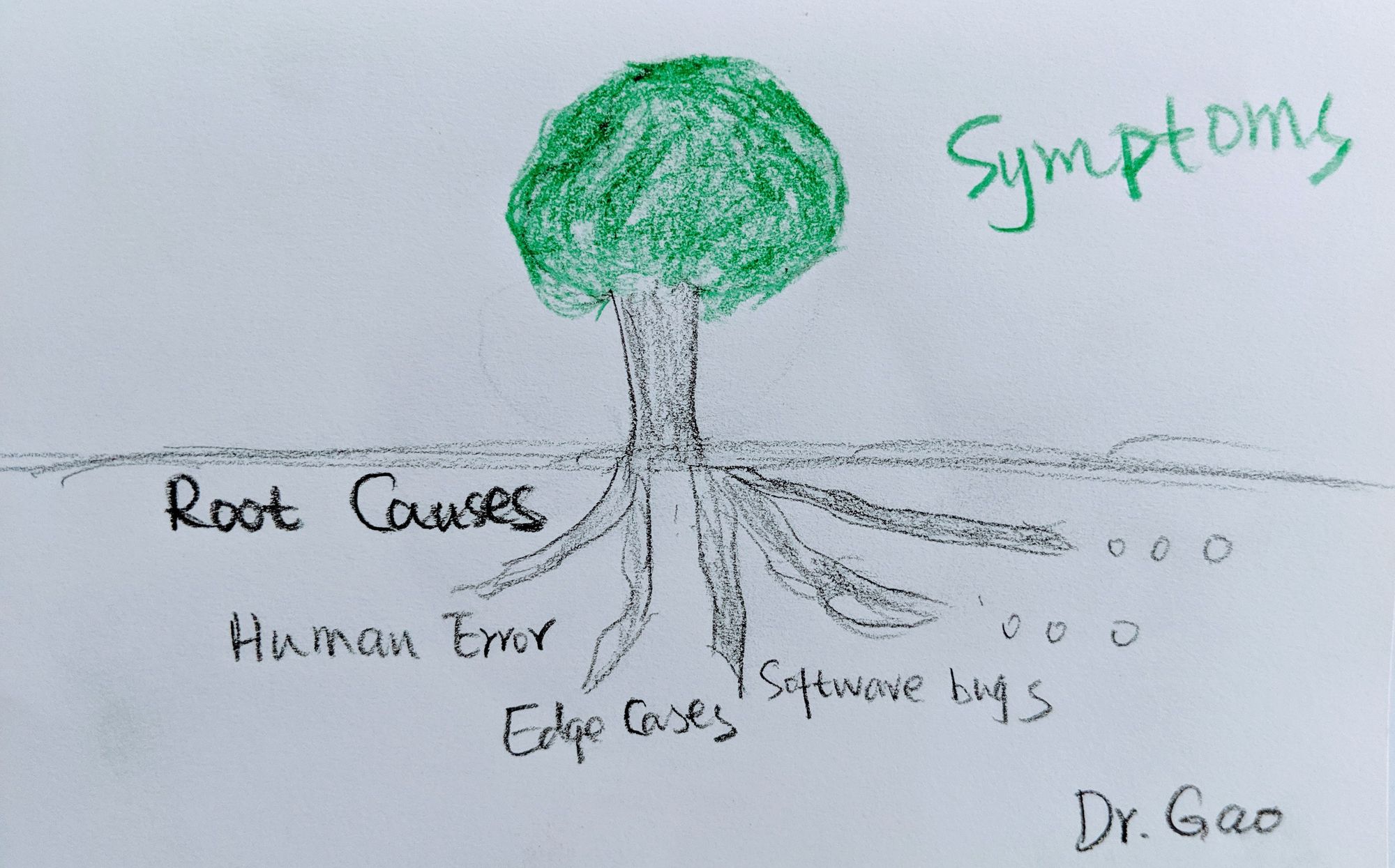

What you see first is usually a consequence or a symptom, not the root cause.

“The Dev environment is down!!!” We heard louder screams from developers when the busy dev environment was down than when the production environment was down. We dropped whatever we were doing to stop those screams as fast as we could. We pushed an app and saw the following logs:

[cell/0] Creating container for app xx, container successfully created

[cell/0] ERR Downloading Failed

[cell/0] OUT cell-xxxxx stopping instance, destroying the container

[api/0] OUT process crashed with type: "web"

[api/0] OUT app instance exited

If you care more about the root cause than about the process of figuring it out, skip ahead to the list of expired certificates.

The log told us “Downloading Failed”, but it did not say what had failed to download. Knowing how an app is pushed, staged, and run, we could guess that it was the droplet download: after creating the container, the cell's next step is to fetch the droplet and run the app in that container. However, we still did not know the root cause of “Downloading Failed”.

That is where the fun comes in: figuring it out is our chance to feel smart again! 🙂

We ran “bosh ssh” to get onto the cell node and looked at the logs, where “bad tls” showed up in the log entries. That told us some certificates had a problem. Unfortunately, the logs never tell you exactly which certificates are the problematic ones.

In our case, we use the safe CLI tool to manage all of our certificates, which are stored in Vault. safe has a command, “safe x509 validate [path to the cert]”, that inspects and validates a certificate. With a simple script, we looped through all of the certificates used in the misbehaving CF environment, running “safe x509 validate” on each one.

The output told us that the following certificates had expired (root cause!!!):

api/cf_networking/policy_server_internal/server_cert

syslogger/scalablesyslog/adapter/tls/cert

syslogger/scalablesyslog/adapter_rlp/tls/cert

loggregator_trafficcontroller/reverse_log_proxy/loggregator/tls/reverse_log_proxy/cert

bbs/silk-controller/cf_networking/silk_controller/server_cert

bbs/silk-controller/cf_networking/silk_daemon/server_cert

bbs/locket/tls/cert

diego/scheduler/scalablesyslog/scheduler/tls/api/cert

diego/scheduler/scalablesyslog/scheduler/tls/client/cert

cell/rep/diego/rep/server_cert

cell/rep/tls/cert

cell/vxlan-policy-agent/cf_networking/vxlan_policy_agent/client_cert

cell/silk-daemon/cf_networking/silk_daemon/client_cert
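The validation loop described earlier could look something like the following. This is a minimal sketch rather than the script we actually ran: the function names and the `cert-paths.txt` file are made up for illustration, and it assumes that “safe x509 validate” exits with a non-zero status when a certificate fails validation.

```shell
#!/usr/bin/env bash
# Sketch: validate every certificate path listed in a file, one path per line.

# Thin wrapper around the safe command quoted in this post.
# Assumption: it exits non-zero when the certificate fails validation.
# stdin is redirected so safe cannot swallow the list being read by the loop.
validate_cert() {
  safe x509 validate "$1" </dev/null >/dev/null 2>&1
}

# Print each path whose validation fails; return 1 if any failed, 0 otherwise.
find_bad_certs() {
  list_file="$1"
  failed=0
  while IFS= read -r path; do
    # Skip blank lines and comment lines in the list file.
    case "$path" in
      ''|'#'*) continue ;;
    esac
    if ! validate_cert "$path"; then
      echo "$path"
      failed=1
    fi
  done < "$list_file"
  return "$failed"
}
```

With the full Vault paths of the certificates listed one per line in a file, `find_bad_certs cert-paths.txt` prints only the paths that failed validation.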

If you are not using safe, you can use openssl or a similar tool to view a certificate's validity dates:

$ openssl x509 -noout -dates -in cert_file

notBefore=Jul 13 22:25:49 2018 GMT

notAfter=Jul 12 22:25:49 2019 GMT
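To check a certificate without reading the dates by eye, openssl also has a `-checkend` option, which takes a number of seconds and sets the exit status according to whether the certificate will still be valid at that point. A minimal sketch, where the `expires_within` name and the 30-day default are our own choices for illustration:

```shell
# Report whether a PEM certificate expires within the next N days (default 30).
# `openssl x509 -checkend SECONDS` exits 0 if the certificate will still be
# valid SECONDS from now, and non-zero otherwise.
expires_within() {
  cert_file="$1"
  days="${2:-30}"
  if openssl x509 -noout -checkend "$((days * 86400))" -in "$cert_file" >/dev/null 2>&1; then
    echo "OK: $cert_file is valid for at least $days more days"
  else
    # A non-zero exit also covers files openssl could not read or parse.
    echo "WARNING: $cert_file expires within $days days, has expired, or could not be read"
    return 1
  fi
}
```

For example, `expires_within cert_file 14` warns if the certificate has two weeks or less left.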

We then ran “safe x509 renew” against all of the expired certificates. After double-checking that every one of them had been renewed successfully, we redeployed CF to roll out the new certificates.

The redeployment went well for the most part, but when it reached the cell instances it hung on the first one indefinitely. We tried “bosh redeploy” with the “--skip-drain” flag; unfortunately, this did not solve our issue. We then observed that the certificates on the bbs node had been updated to the new ones, while the certificates on the cell nodes were still the old ones. Hmm… this meant that the bbs and cell nodes could not talk to each other.
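One way to confirm this kind of mismatch is to compare the fingerprint of the certificate a node is actually using against the renewed one. A rough sketch, assuming you have both certificates available as PEM files; the function and file names here are hypothetical:

```shell
# Print the SHA-256 fingerprint of a PEM certificate.
cert_fingerprint() {
  openssl x509 -noout -fingerprint -sha256 -in "$1"
}

# Exit 0 if both files hold the same certificate, 1 if they differ,
# and 2 if either file could not be read as a certificate.
same_cert() {
  a="$(cert_fingerprint "$1")" || return 2
  b="$(cert_fingerprint "$2")" || return 2
  [ "$a" = "$b" ]
}
```

For example, `same_cert renewed.pem from-the-cell.pem || echo "cell still has the old cert"`.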

Everyone needs a little help sometimes, and so does BOSH.

Without digging further into what exactly was making the cell update hang, we decided to give BOSH a little help. We ran “bosh ssh” to get onto the cell that was hanging, manually replaced all of the expired certificates in its config files, and then ran “monit restart all” on the cell. This nudged the “bosh redeploy” into moving forward. We got a happily running dev CF back, and the world finally quieted down.

The story should never end here, because a good engineer will always try to fix the problem before it becomes a real issue.

Our awesome coworker Tom Mitchell wrote Doomsday.

Doomsday is a server (and also a CLI) which can be configured to track certificates from different storage backends (Vault, Credhub, Pivotal Ops Manager, or actual websites) and provide a tidy view into when certificates will expire.

Deploy Doomsday, rotate your certs before they expire, and live a happier life!